Typical configuration

4-GPU AI Training Server

- CPU: AMD EPYC 9654 (DDR5 ECC)
- GPU: 4× NVIDIA H100 (PCIe)
- RAM: 256GB DDR5 ECC
- Storage: 4× NVMe U.3 (3.84TB)

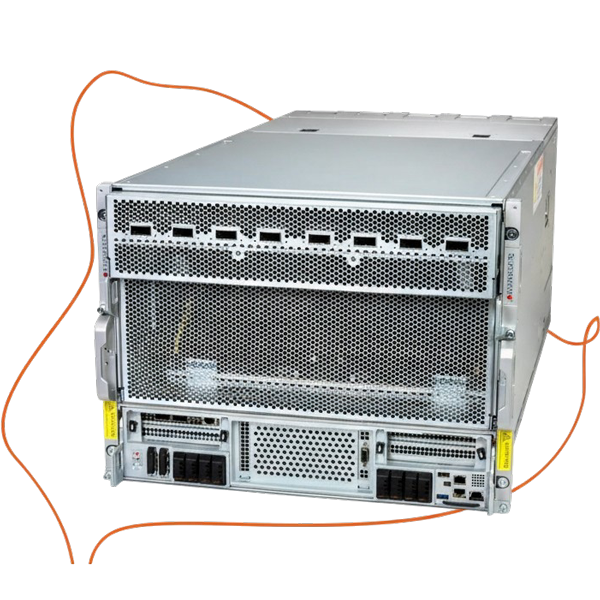

Enterprise rack servers

Built for AI training, inference and high-performance compute.

GPU rack servers tuned for multi-accelerator training and inference. PCIe lane budget and NVMe scratch staging reduce GPU wait time; power and cooling sustain utilisation.

Experts in configuring GPU Rack Servers

Supports PCIe Gen4 / Gen5 for AI training and machine learning, keeping GPUs fed and raising throughput.

Validated for up to 8× GPUs against power and thermal envelopes for sustained utilisation.

Optimised NVMe storage for AI training and machine learning cuts staging latency and keeps accelerators compute-bound.

Aligns DDR5 ECC memory bandwidth with batch throughput to avoid CPU bottlenecks during AI training and machine learning.

Engineered with redundant PSUs and cooling headroom to hold thermal and electrical margins under sustained load.

Cuts step time by keeping multi-GPU training compute-bound during ingestion and gradient exchange.

Improves inference throughput with low-latency batch scheduling across nodes.

Reduces render latency by sustaining GPU pipeline saturation for VFX and 3D workloads.

Improves parallel job throughput by keeping accelerator memory and I/O paths fed during long runs.

PCIe bandwidth & expansion

Supports PCIe Gen4 / Gen5 lanes and slot topology to minimise interconnect stalls and raise throughput.
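
As a rough illustration of lane budgeting, the sketch below totals the PCIe lanes that the typical 4-GPU configuration listed above would consume. The link widths (x16 per GPU, x4 per NVMe drive) and the 128-lane CPU figure are assumptions for a single-socket EPYC 9004-series platform, not validated figures for any specific build, and all names are hypothetical.

```python
# Assumed lane count for a single-socket platform; check the motherboard manual.
CPU_LANES = 128

# device name -> (count, assumed lanes per device)
DEVICES = {
    "gpu": (4, 16),   # 4x GPUs, assumed x16 links
    "nvme": (4, 4),   # 4x U.3 NVMe drives, assumed x4 links
}


def lanes_used(devices):
    """Sum the lanes consumed by every device in the build."""
    return sum(count * width for count, width in devices.values())


used = lanes_used(DEVICES)
remaining = CPU_LANES - used
print(f"lanes used: {used}, lanes left for NICs and expansion: {remaining}")
```

Under these assumptions the build leaves spare lanes for network adapters; a denser 8-GPU layout would consume most of the budget and may need PCIe switches or a second socket.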

GPU support & density

Supports expansion to up to 8× GPUs with sufficient power and thermal headroom for sustained utilisation.

Storage architecture (NVMe)

Uses NVMe storage to reduce staging latency and improve checkpoint write bandwidth.

Cooling & power considerations

Engineered with redundant PSUs and cooling headroom for stable thermal and electrical margins under sustained load.
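
To make the idea of electrical margin concrete, here is a minimal sketch of a power check for a 4-GPU build with a 1+1 redundant PSU pair. Every wattage and the PSU rating are illustrative assumptions rather than measured or quoted values; substitute datasheet figures for a real configuration.

```python
# All wattages are illustrative assumptions; use datasheet values for a real build.
# component name -> (count, assumed watts each)
COMPONENT_WATTS = {
    "gpu": (4, 350),
    "cpu": (1, 360),
    "nvme": (4, 25),
    "platform": (1, 300),  # fans, memory, motherboard, NICs (assumed lump sum)
}
PSU_WATTS = 2700  # hypothetical rating of one PSU in a 1+1 redundant pair


def total_load(components):
    """Sum the assumed peak draw of all components, in watts."""
    return sum(count * watts for count, watts in components.values())


def redundant_headroom(components, psu_watts):
    """Watts left on a single PSU: with 1+1 redundancy one unit must carry the full load."""
    return psu_watts - total_load(components)


load = total_load(COMPONENT_WATTS)
headroom = redundant_headroom(COMPONENT_WATTS, PSU_WATTS)
print(f"estimated load: {load} W, headroom on one PSU: {headroom} W")
```

A negative headroom would mean the system cannot ride through a single PSU failure at full load, which is the condition redundancy is meant to rule out.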

Representative configurations — every build is tailored to your workload and environment.

2-GPU nodes for inference and small training runs with stable thermals.

4-GPU systems that balance PCIe expansion, power, and sustained throughput.

8-GPU chassis sized for dense deployments and sustained utilisation.

Share your workload requirements; we spec, validate, and quote to deployment constraints.